Auto Assign Reviewers

Automatically match papers with reviewers using Smart Matching

Auto Assign uses Smart Matching to find the best reviewers for each paper. It scores reviewer-paper compatibility using text similarity and keyword overlap, then finds the globally optimal assignment across all papers simultaneously.

Getting Started

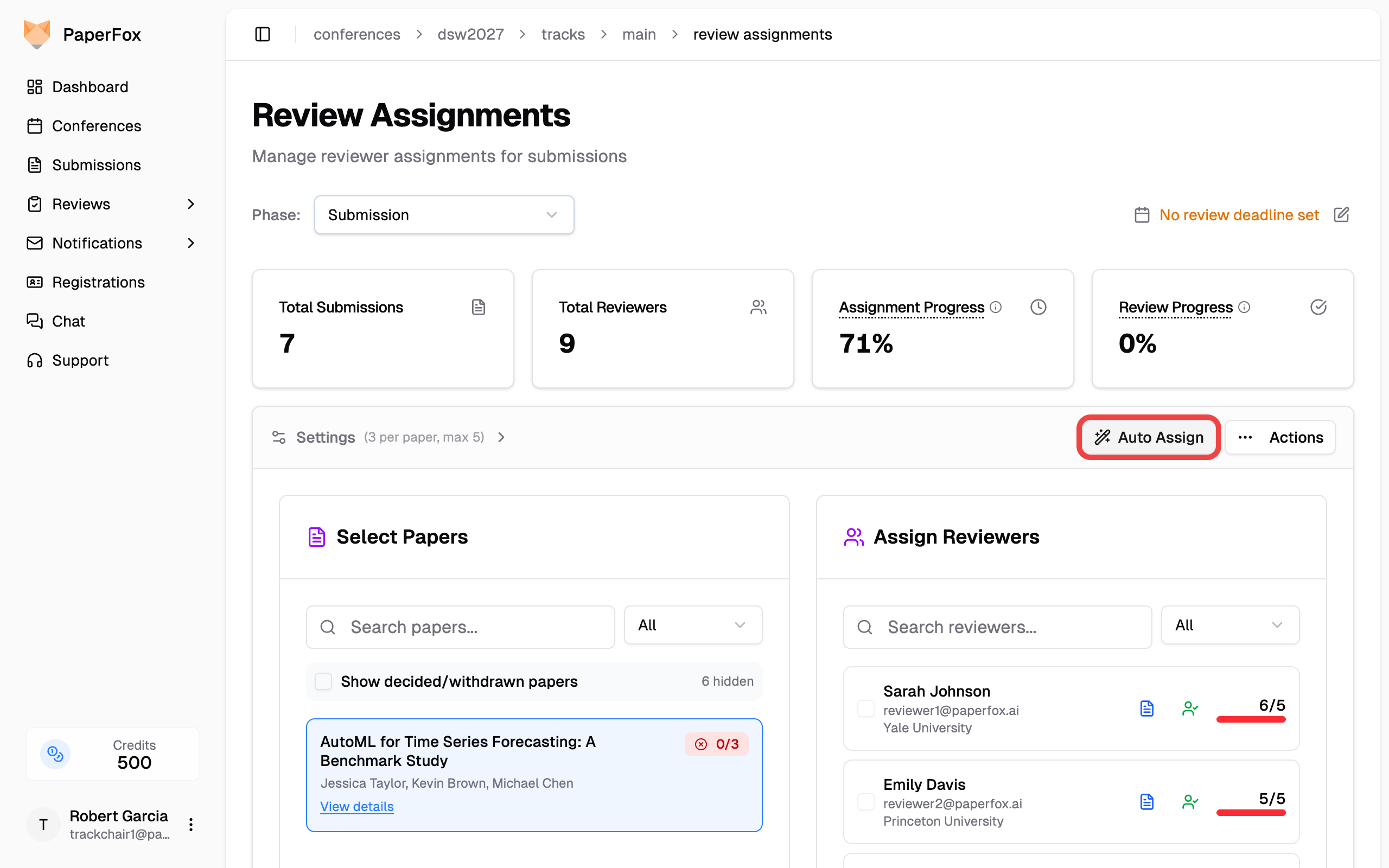

- Go to Submissions → Assignments for your track

- Click "Auto Assign" in the toolbar

This opens the auto-assignment page where you configure matching settings.

Settings

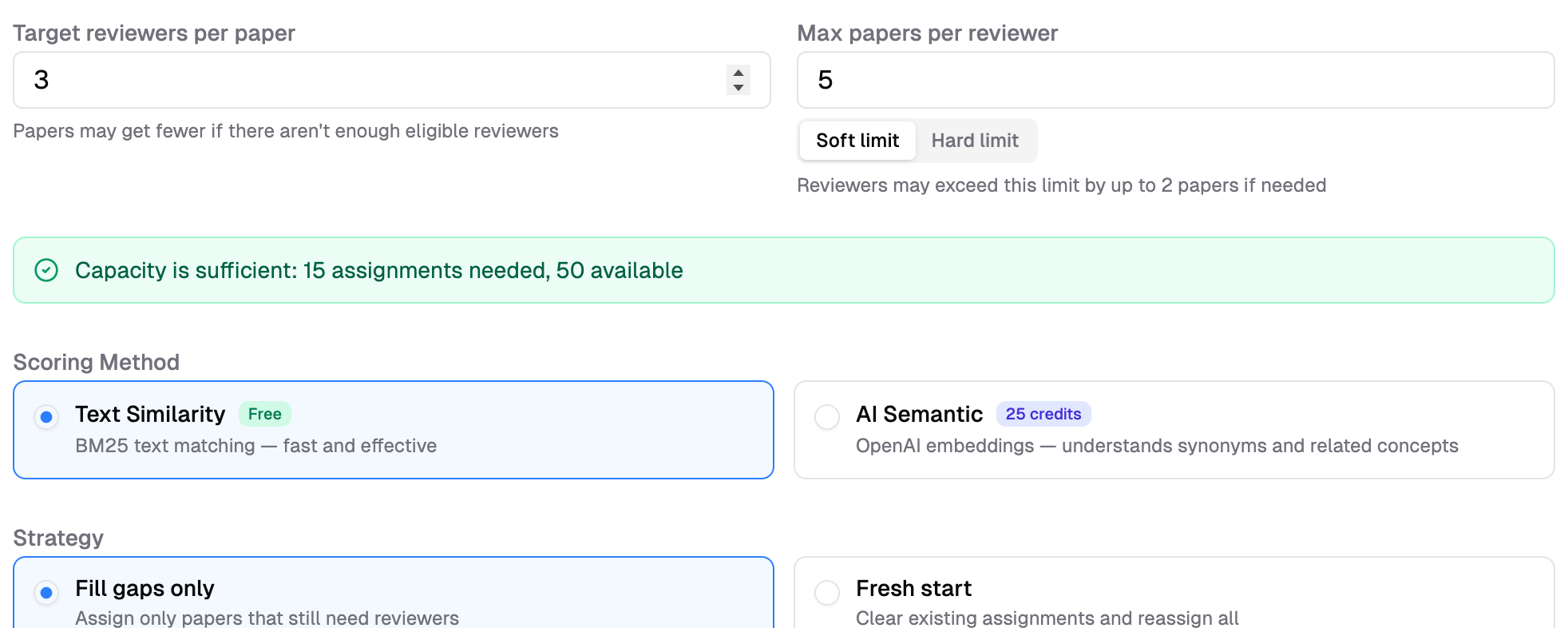

Target Reviewers per Paper

How many reviewers to assign to each paper. Default: 3. This is a target — papers may receive fewer reviewers if there aren't enough eligible reviewers available (due to COI filtering or capacity limits).

Max Papers per Reviewer

Upper limit on papers assigned to a single reviewer. Default: 5. Use the toggle to choose how strictly this limit is enforced:

| Mode | Behavior |
|---|---|
| Soft limit (default) | Reviewers may exceed the limit by up to 2 papers if needed to ensure all papers get assigned |
| Hard limit | Strict cap: no reviewer will exceed this number, even if some papers get fewer reviewers as a result |

Use Soft limit when full coverage matters most — you want every paper to get reviewers, even if a few reviewers go slightly over capacity. Use Hard limit when reviewer workload is the priority — no one should be overloaded, even if it means some papers get fewer reviewers than the target.

Feasibility Indicator

Below the settings, a color-coded banner shows whether your current configuration is feasible:

- Green: Capacity is sufficient. All papers can be assigned the target number of reviewers.

- Amber: Capacity is tight. With Soft limit, reviewers can go over the limit so every paper is covered; with Hard limit, some papers may get fewer reviewers than the target.

- Red: Insufficient capacity. Some papers will get fewer reviewers than the target. Consider adding more reviewers or increasing Max Papers per Reviewer.
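The banner logic can be approximated with simple arithmetic. The sketch below is an assumption about how such a check could work, not the product's actual rule: the function name `feasibility` and its parameters are hypothetical, and it ignores COI filtering, which reduces real capacity further.

```python
def feasibility(num_papers, num_reviewers,
                target_per_paper=3, max_per_reviewer=5, soft_overflow=2):
    """Classify capacity as 'green', 'amber', or 'red' (illustrative only)."""
    demand = num_papers * target_per_paper                 # review slots needed
    hard_capacity = num_reviewers * max_per_reviewer       # slots under Hard limit
    soft_capacity = num_reviewers * (max_per_reviewer + soft_overflow)
    if demand <= hard_capacity:
        return "green"
    if demand <= soft_capacity:
        return "amber"   # only fits if reviewers may go over the limit
    return "red"


print(feasibility(40, 30))  # demand 120, hard capacity 150 -> green
print(feasibility(60, 30))  # demand 180, soft capacity 210 -> amber
print(feasibility(80, 30))  # demand 240 exceeds 210 -> red
```

If the result is red, raising `max_per_reviewer` or `num_reviewers` in this calculation shows roughly how much extra capacity would be needed.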

Scoring Method

Choose how text similarity between papers and reviewer expertise is computed.

| Method | How It Works | Cost | Speed |
|---|---|---|---|
| Text Similarity | BM25 text matching against paper titles and abstracts | Free | Seconds |
| AI Semantic | OpenAI embeddings; understands synonyms and related concepts | Credits (based on paper count) | Seconds |

Both methods also use phrase-level keyword matching (comparing paper keywords with reviewer expertise tags) in addition to text similarity.
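For readers unfamiliar with BM25, here is a minimal sketch of the standard Okapi BM25 formula in plain Python. It is illustrative only: the whitespace tokenizer, the parameter values `k1=1.5` and `b=0.75`, and the function name `bm25_scores` are assumptions, not details of how Auto Assign implements Text Similarity.

```python
import math
from collections import Counter


def tokenize(text):
    # Assumed tokenizer: lowercase and split on whitespace.
    return text.lower().split()


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Score each document (e.g. title + abstract) against a query
    (e.g. a reviewer's expertise text) with Okapi BM25."""
    docs = [tokenize(d) for d in documents]
    n = len(docs)
    avg_len = sum(len(d) for d in docs) / n
    # Document frequency: in how many documents each term appears.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            length_norm = k1 * (1 - b + b * len(d) / avg_len)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores.append(score)
    return scores


papers = [
    "Graph neural networks for molecule property prediction",
    "Query optimization in distributed databases",
]
scores = bm25_scores("graph neural networks", papers)
print(scores[0] > scores[1])  # True: only the first paper shares terms
```

Because BM25 matches exact terms, a reviewer profile that says "NLP" would score zero against a paper that only says "natural language processing". That gap is what the AI Semantic method is meant to close.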

How scoring works

Each reviewer-paper pair gets a score from 0–100: up to 70 points from text similarity (BM25 or AI embeddings) and up to 30 points from keyword overlap. The system then uses network flow optimization (Min-Cost Max-Flow) to find the assignment that maximizes the total score across all papers — not just one paper at a time.
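To make the difference between per-paper and global matching concrete, the sketch below combines the two score components and solves a tiny assignment with Min-Cost Max-Flow. It is a teaching example under stated assumptions: `combined_score`, `MinCostFlow`, and `assign` are hypothetical names, both score components are assumed to be normalized to 0-1 before weighting, and the graph layout (source to paper, paper to reviewer, reviewer to sink) is one common way to model the problem rather than the product's confirmed design.

```python
from collections import deque


def combined_score(text_similarity, keyword_overlap):
    """Both inputs are assumed to lie in [0, 1]; returns an integer 0-100."""
    return round(70 * text_similarity + 30 * keyword_overlap)


class MinCostFlow:
    """Successive shortest paths; edge = [to, capacity, cost, reverse_index]."""

    def __init__(self, node_count):
        self.graph = [[] for _ in range(node_count)]

    def add_edge(self, u, v, capacity, cost):
        self.graph[u].append([v, capacity, cost, len(self.graph[v])])
        self.graph[v].append([u, 0, -cost, len(self.graph[u]) - 1])

    def run(self, source, sink):
        total_flow = total_cost = 0
        while True:
            # Queue-based Bellman-Ford, since score edges have negative cost.
            dist = [float("inf")] * len(self.graph)
            prev = [None] * len(self.graph)
            queued = [False] * len(self.graph)
            dist[source] = 0
            queue = deque([source])
            while queue:
                u = queue.popleft()
                queued[u] = False
                for i, (v, capacity, cost, _) in enumerate(self.graph[u]):
                    if capacity > 0 and dist[u] + cost < dist[v]:
                        dist[v] = dist[u] + cost
                        prev[v] = (u, i)
                        if not queued[v]:
                            queue.append(v)
                            queued[v] = True
            if prev[sink] is None:
                return total_flow, total_cost
            # Find the bottleneck on the path, then push flow along it.
            push, v = float("inf"), sink
            while v != source:
                u, i = prev[v]
                push = min(push, self.graph[u][i][1])
                v = u
            v = sink
            while v != source:
                u, i = prev[v]
                edge = self.graph[u][i]
                edge[1] -= push
                self.graph[v][edge[3]][1] += push
                v = u
            total_flow += push
            total_cost += push * dist[sink]


def assign(scores, target_per_paper, max_per_reviewer):
    """scores: {(paper, reviewer): integer 0-100}; missing pairs are ineligible."""
    papers = sorted({p for p, _ in scores})
    reviewers = sorted({r for _, r in scores})
    paper_node = {p: 1 + i for i, p in enumerate(papers)}
    reviewer_node = {r: 1 + len(papers) + j for j, r in enumerate(reviewers)}
    source, sink = 0, 1 + len(papers) + len(reviewers)
    net = MinCostFlow(sink + 1)
    for p in papers:
        net.add_edge(source, paper_node[p], target_per_paper, 0)
    for r in reviewers:
        net.add_edge(reviewer_node[r], sink, max_per_reviewer, 0)
    for (p, r), score in scores.items():
        net.add_edge(paper_node[p], reviewer_node[r], 1, -score)  # maximize score
    net.run(source, sink)
    node_to_reviewer = {node: r for r, node in reviewer_node.items()}
    # A paper-to-reviewer edge with no capacity left carries an assignment.
    return {p: [node_to_reviewer[to] for to, capacity, _, _ in net.graph[paper_node[p]]
                if to in node_to_reviewer and capacity == 0]
            for p in papers}


scores = {("P1", "A"): 90, ("P1", "B"): 80, ("P2", "A"): 85, ("P2", "B"): 20}
print(assign(scores, target_per_paper=1, max_per_reviewer=1))
# {'P1': ['B'], 'P2': ['A']}
```

Matching one paper at a time would give reviewer A to P1 (score 90) and leave P2 with B (score 20), for a total of 110. The global solution gives up 10 points on P1 to gain 65 on P2, for a total of 165.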

When to use AI Semantic

Select AI Semantic when you need the algorithm to understand that related concepts match — for example, "NLP" and "natural language processing", or "deep learning" and "neural networks". Paper titles, abstracts, and reviewer expertise tags are sent to OpenAI for embedding. Use Text Similarity instead if data privacy is a concern.

Strategy

Fill Gaps Only (Default)

Only assigns reviewers to papers that don't have enough reviewers yet. Existing assignments are preserved — no existing reviewer-paper pairs are changed.

Use when: You've already made some manual assignments and want to fill in the rest.
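In effect, Fill Gaps Only computes how many reviewer slots each paper still needs and matches only those. A minimal sketch, assuming a hypothetical `remaining_slots` helper and a simple dictionary of existing assignments:

```python
def remaining_slots(existing, papers, target_per_paper=3):
    """Return {paper: open slots} for papers below the target.

    existing: {paper: [reviewers already assigned]} (hypothetical structure)
    """
    gaps = {}
    for paper in papers:
        open_slots = target_per_paper - len(existing.get(paper, []))
        if open_slots > 0:
            gaps[paper] = open_slots
    return gaps


existing = {"P1": ["A", "B", "C"], "P2": ["A"]}
print(remaining_slots(existing, ["P1", "P2", "P3"]))
# {'P2': 2, 'P3': 3}
```

P1 already has three reviewers, so it is left out entirely and its assignments stay untouched.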

Fresh Start

Generates new assignments for all papers, replacing existing ones. When you apply the suggestions:

- Existing assignments without a submitted review are removed and replaced with the new suggestions

- Existing assignments with a submitted review are kept — the system never deletes work that reviewers have already completed

The confirmation dialog shows exactly how many assignments will be removed and how many will be kept before you apply.

Use when: Starting over or regenerating all assignments from scratch.
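The keep-or-remove rule for Fresh Start can be sketched as a simple partition of existing assignments. The `Assignment` record and `plan_fresh_start` function are hypothetical names used for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assignment:
    paper: str
    reviewer: str
    review_submitted: bool


def plan_fresh_start(existing):
    """Split existing assignments into (kept, removed)."""
    kept = [a for a in existing if a.review_submitted]         # completed work stays
    removed = [a for a in existing if not a.review_submitted]  # replaced by new suggestions
    return kept, removed


existing = [
    Assignment("P1", "A", review_submitted=True),
    Assignment("P1", "B", review_submitted=False),
    Assignment("P2", "C", review_submitted=False),
]
kept, removed = plan_fresh_start(existing)
print(f"{len(removed)} assignments will be removed, {len(kept)} will be kept")
# 2 assignments will be removed, 1 will be kept
```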

Options

COI Enforcement

COI is always enforced — reviewers who have conflicts with paper authors are automatically excluded before the matching algorithm runs. Three rules are checked: self-conflict, co-author conflict, and declared conflict.

See Conflict of Interest Enforcement for full details on COI rules and how to encourage reviewers to declare their conflicts.
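As a rough illustration of the three checks, the sketch below filters reviewers for one paper before any matching happens. The data structures and the names `conflict_reason` and `eligible_reviewers` are assumptions, and the co-author rule is simplified here to "the reviewer has previously co-authored with one of the paper's authors".

```python
def conflict_reason(reviewer, paper_authors, coauthors, declared):
    """Return the first matching conflict rule, or None if the reviewer is eligible.

    coauthors: {person: set of past co-authors}            (hypothetical structure)
    declared:  {reviewer: set of people they declared a conflict with}
    """
    authors = set(paper_authors)
    if reviewer in authors:
        return "self-conflict"
    if coauthors.get(reviewer, set()) & authors:
        return "co-author conflict"
    if declared.get(reviewer, set()) & authors:
        return "declared conflict"
    return None


def eligible_reviewers(reviewers, paper_authors, coauthors, declared):
    return [r for r in reviewers
            if conflict_reason(r, paper_authors, coauthors, declared) is None]


reviewers = ["alice", "bob", "carol", "dave"]
paper_authors = ["alice", "erin"]
coauthors = {"bob": {"erin"}}
declared = {"carol": {"erin"}}
print(eligible_reviewers(reviewers, paper_authors, coauthors, declared))
# ['dave']
```

Because excluded pairs never reach the matching step, a conflicted reviewer cannot be suggested for that paper however high the expertise match would have been.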

Balance Workload

When enabled, the algorithm favors distributing papers evenly across reviewers, even if it means slightly lower expertise matches.

Use when: You want to avoid overloading some reviewers while others have few papers.
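One common way to trade expertise for balance is to subtract a small penalty for every paper a reviewer already holds. The sketch below shows the idea for a single paper; the function name `pick_reviewer` and the penalty of 5 points per paper are hypothetical values, and the actual algorithm may balance workload differently.

```python
def pick_reviewer(scores, load, penalty_per_paper=5):
    """Pick the reviewer with the best load-adjusted score for one paper.

    scores: {reviewer: match score 0-100}
    load:   {reviewer: papers already assigned}
    """
    return max(scores, key=lambda r: scores[r] - penalty_per_paper * load.get(r, 0))


scores = {"A": 90, "B": 84}
load = {"A": 3, "B": 1}
print(pick_reviewer(scores, load, penalty_per_paper=0))  # A (best raw match)
print(pick_reviewer(scores, load))                       # B (90 - 15 < 84 - 5)
```

Reviewer B is a slightly weaker match but carries fewer papers, so the adjusted score favors B.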

Reviewers Without Research Profiles

Reviewers who haven't completed their research profile cannot be matched by expertise. They will be assigned to fill remaining slots after all profiled reviewers are placed.
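The two-phase behavior can be pictured as a second pass that runs after expertise matching. This is a simplified sketch with a hypothetical `fill_remaining_slots` function; it omits COI filtering for brevity, and the real system may choose among unprofiled reviewers differently.

```python
def fill_remaining_slots(assignments, unprofiled,
                         target_per_paper=3, max_per_reviewer=5):
    """Top up papers that are still short, using reviewers without profiles.

    assignments: {paper: [reviewers placed by expertise matching]}
    """
    result = {paper: list(revs) for paper, revs in assignments.items()}
    load = {r: 0 for r in unprofiled}
    for assigned in result.values():
        # Offer the least-loaded reviewers first so the extra work spreads out.
        for reviewer in sorted(unprofiled, key=lambda r: load[r]):
            if len(assigned) >= target_per_paper:
                break
            if load[reviewer] < max_per_reviewer and reviewer not in assigned:
                assigned.append(reviewer)
                load[reviewer] += 1
    return result


matched = {"P1": ["A", "B", "C"], "P2": ["A"], "P3": []}
print(fill_remaining_slots(matched, ["X", "Y"], max_per_reviewer=2))
# {'P1': ['A', 'B', 'C'], 'P2': ['A', 'X', 'Y'], 'P3': ['X', 'Y']}
```

In this example P3 ends up with only unprofiled reviewers and still falls short of the target, which is why getting profiles completed before running Auto Assign matters.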

If you see a warning about reviewers without profiles:

- Go to the reviewers page

- Send reminder emails to reviewers without profiles

- Wait for profiles to be updated, then run auto-assign again

See Research Profiles for details on what reviewers should include.

Running Auto Assign

- Adjust Target reviewers per paper and Max papers per reviewer if needed

- Choose Soft limit or Hard limit for the reviewer cap, then check the feasibility indicator to confirm capacity is sufficient

- Select a scoring method (Text Similarity or AI Semantic)

- Choose a strategy (Fill gaps only or Fresh start)

- Turn Balance workload on or off (COI enforcement is always on)

- Click "Generate Assignments"

After generation completes, you'll see a suggestions page where you can:

- Review each proposed assignment

- See match scores and reasoning

- Accept or reject individual suggestions

- Apply accepted assignments in bulk

When you apply, a confirmation dialog summarizes the impact. For Fill gaps only, it confirms that existing assignments are preserved. For Fresh start, it shows how many existing assignments will be removed and how many with submitted reviews will be kept.

Tips for Best Results

- Ensure reviewers have profiles: The more complete the research profiles, the better the matching quality

- Use keywords on papers: Papers with topic keywords get better matches than those with only titles

- Start with Text Similarity: It's free and fast, so you can iterate quickly

- Try AI Semantic for the final round: Use it when you need the highest-quality matches and have credits available

- Check feasibility first: If the indicator is red, adjust your settings before generating